
Typical Decoding for Natural Language Generation (Get more human-like outputs from language models!)

#deeplearning #nlp #sampling

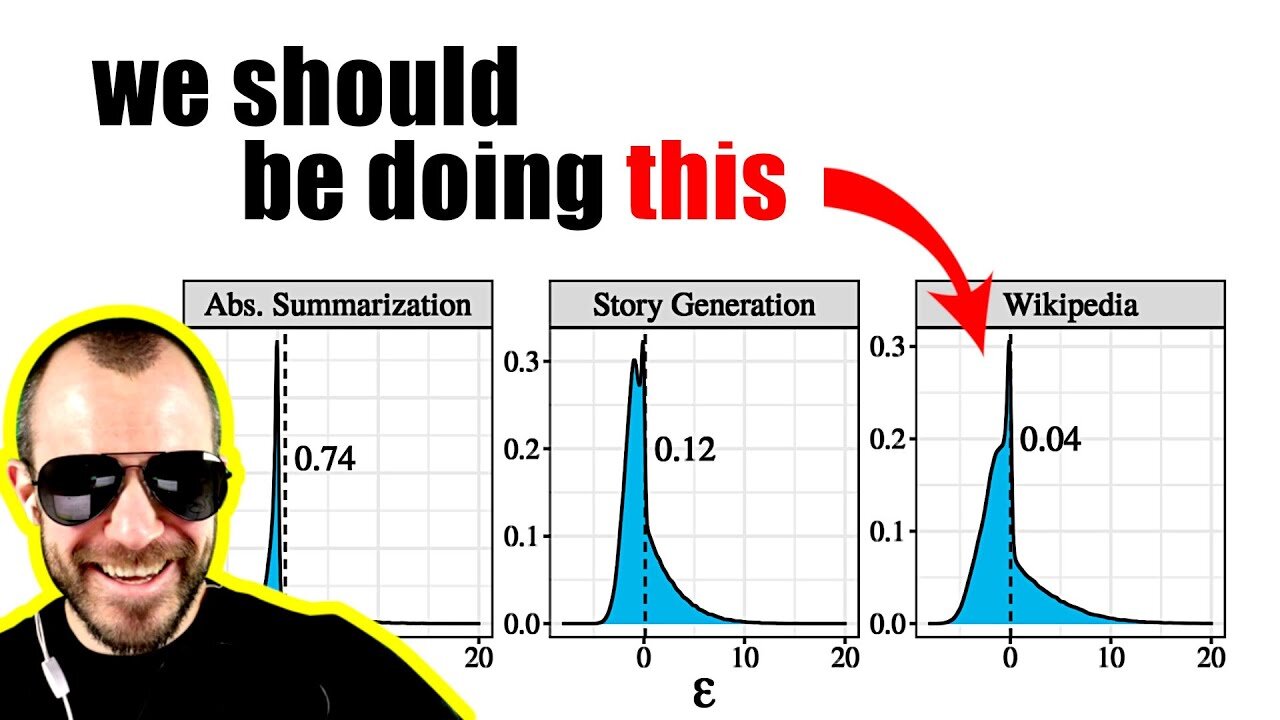

Modern language models like T5 or GPT-3 achieve remarkably low perplexities on both training and validation data, yet when sampling from their output distributions, the generated text often seems dull and uninteresting. Various workarounds have been proposed, such as top-k sampling and nucleus sampling, but while these manage to somewhat improve the generated samples, they are hacky and unfounded. This paper introduces typical sampling, a new decoding method that is principled, effective, and can be implemented efficiently. Typical sampling turns away from sampling purely based on likelihood and explicitly finds a trade-off between generating high-probability samples and generating high-information samples. The paper connects typical sampling to psycholinguistic theories on human speech generation, and shows experimentally that typical sampling achieves much more diverse and interesting results than any of the current methods.
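For reference, the two baselines mentioned above can be sketched in a few lines of plain Python. This is a minimal illustration over a toy next-token distribution, not code from the paper or the video; the names `top_k_filter`, `nucleus_filter`, and the helper `_renormalize` are hypothetical and chosen only for this example.

```python
def _renormalize(probs, kept):
    # Zero out every token that was not kept and rescale the rest to sum to 1.
    total = sum(probs[i] for i in kept)
    filtered = [0.0] * len(probs)
    for i in kept:
        filtered[i] = probs[i] / total
    return filtered


def top_k_filter(probs, k):
    # Top-k: keep the k most probable tokens, regardless of their total mass.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return _renormalize(probs, ranked[:k])


def nucleus_filter(probs, p):
    # Nucleus (top-p): keep the smallest set of most probable tokens
    # whose cumulative probability reaches p.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, total = [], 0.0
    for i in ranked:
        kept.append(i)
        total += probs[i]
        if total >= p:
            break
    return _renormalize(probs, kept)


# Toy distribution over five tokens (an assumption for illustration).
toy_probs = [0.5, 0.2, 0.15, 0.1, 0.05]
top2 = top_k_filter(toy_probs, k=2)
nucleus = nucleus_filter(toy_probs, p=0.8)
```

Both filters always start from the most probable token and work downward, which is the purely likelihood-based behavior that typical sampling moves away from.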

Sponsor: Fully Connected by Weights & Biases

https://wandb.ai/fully-connected

OUTLINE:

0:00 - Intro

1:50 - Sponsor: Fully Connected by Weights & Biases

4:10 - Paper Overview

7:40 - What's the problem with sampling?

11:45 - Beam Search: The good and the bad

14:10 - Top-k and Nucleus Sampling

16:20 - Why the most likely things might not be the best

21:30 - The expected information content of the next word

25:00 - How to trade off information and likelihood

31:25 - Connections to information theory and psycholinguistics

36:40 - Introducing Typical Sampling

43:00 - Experimental Evaluation

44:40 - My thoughts on this paper

Paper: https://arxiv.org/abs/2202.00666

Code: https://github.com/cimeister/typical-...

Abstract:

Despite achieving incredibly low perplexities on myriad natural language corpora, today's language models still often underperform when used to generate text. This dichotomy has puzzled the language generation community for the last few years. In this work, we posit that the abstraction of natural language as a communication channel (à la Shannon, 1948) can provide new insights into the behaviors of probabilistic language generators, e.g., why high-probability texts can be dull or repetitive. Humans use language as a means of communicating information, and do so in a simultaneously efficient and error-minimizing manner; they choose each word in a string with this (perhaps subconscious) goal in mind. We propose that generation from probabilistic models should mimic this behavior. Rather than always choosing words from the high-probability region of the distribution--which have a low Shannon information content--we sample from the set of words with information content close to the conditional entropy of our model, i.e., close to the expected information content. This decision criterion can be realized through a simple and efficient implementation, which we call typical sampling. Automatic and human evaluations show that, in comparison to nucleus and top-k sampling, typical sampling offers competitive performance in terms of quality while consistently reducing the number of degenerate repetitions.
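The decision criterion described in the abstract can be sketched roughly as follows: compute the information content of every candidate token, compute the conditional entropy of the distribution, rank tokens by how close their information content is to that entropy, and keep the closest ones until a chosen probability mass is covered. This is a minimal sketch under stated assumptions, not the authors' implementation (see the Code link above for that); the function name `typical_filter`, the parameter name `mass`, and the toy distribution are all hypothetical.

```python
import math
import random


def typical_filter(probs, mass=0.9):
    # Information content -log p(token); zero-probability tokens are skipped.
    info = {i: -math.log(p) for i, p in enumerate(probs) if p > 0}
    # Conditional entropy: the expected information content under the model.
    entropy = sum(probs[i] * info[i] for i in info)
    # Rank tokens by how close their information content is to the entropy.
    ranked = sorted(info, key=lambda i: abs(info[i] - entropy))
    # Keep the smallest prefix of that ranking whose probability reaches `mass`.
    kept, total = [], 0.0
    for i in ranked:
        kept.append(i)
        total += probs[i]
        if total >= mass:
            break
    # Renormalize the kept tokens; everything else gets probability zero.
    filtered = [0.0] * len(probs)
    for i in kept:
        filtered[i] = probs[i] / total
    return filtered


# Toy next-token distribution (an assumption for illustration).
probs = [0.5, 0.2, 0.15, 0.1, 0.05]
filtered = typical_filter(probs, mass=0.3)
# Draw one token index from the filtered distribution.
token = random.choices(range(len(probs)), weights=filtered)[0]
```

With this toy distribution and `mass=0.3`, the most probable token (index 0) is filtered out: its information content lies further from the entropy than that of tokens 1 and 2, so only those two remain. Top-k and nucleus sampling would never drop the most probable token, which is the main practical difference between them and this criterion.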

Authors: Clara Meister, Tiago Pimentel, Gian Wiher, Ryan Cotterell

Links:

Merch: store.ykilcher.com

TabNine Code Completion (Referral): http://bit.ly/tabnine-yannick

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://discord.gg/4H8xxDF

BitChute: https://www.bitchute.com/channel/yann...

LinkedIn: https://www.linkedin.com/in/ykilcher

BiliBili: https://space.bilibili.com/2017636191

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannick...

Patreon: https://www.patreon.com/yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n
