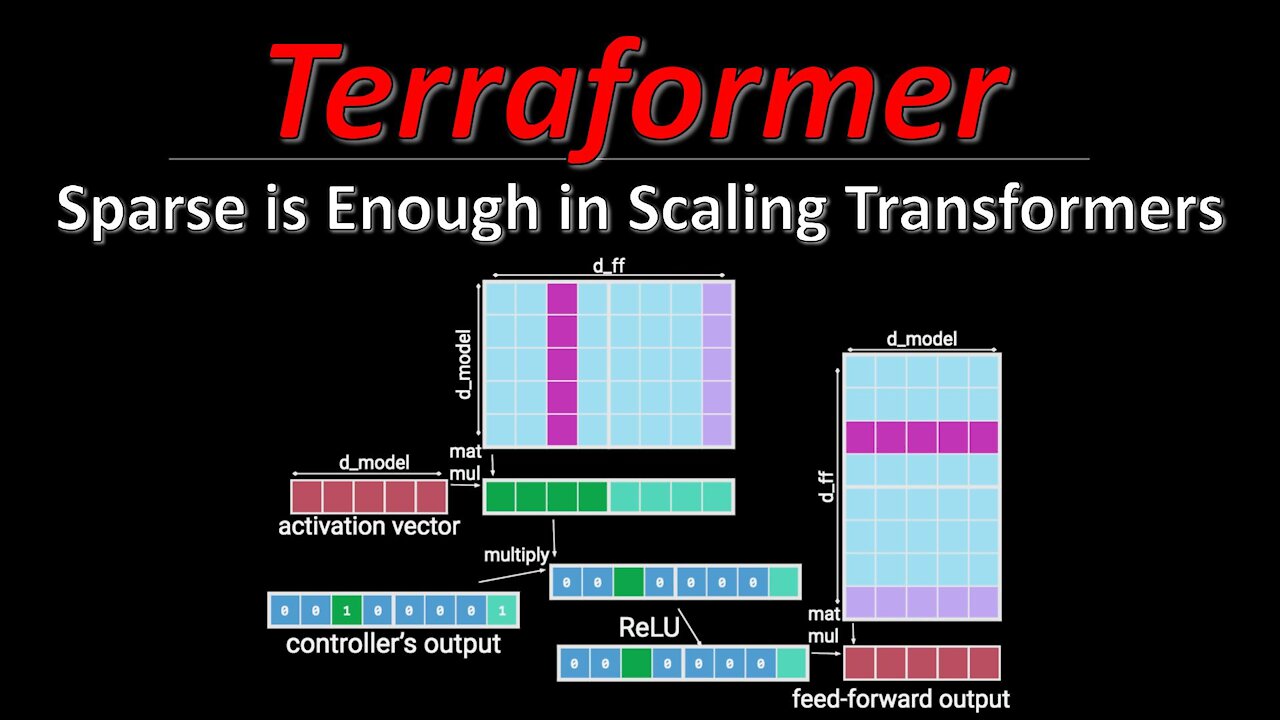

Sparse is Enough in Scaling Transformers (aka Terraformer) | ML Research Paper Explained

#scalingtransformers #terraformer #sparsity

Transformers keep pushing the state of the art in language and other domains, mainly due to their ability to scale to ever more parameters. However, this scaling has made it prohibitively expensive to run a lot of inference requests against a Transformer, in terms of both compute and memory. Scaling Transformers are a new kind of architecture that leverages sparsity in the Transformer blocks to massively speed up inference. By including additional ideas from other architectures, the authors create the Terraformer, which is fast, accurate, and consumes very little memory.

OUTLINE:

0:00 - Intro & Overview

4:10 - Recap: Transformer stack

6:55 - Sparse Feedforward layer

19:20 - Sparse QKV Layer

43:55 - Terraformer architecture

55:05 - Experimental Results & Conclusion

Paper: https://arxiv.org/abs/2111.12763

Code: https://github.com/google/trax/blob/m...

Abstract:

Large Transformer models yield impressive results on many tasks, but are expensive to train, or even fine-tune, and so slow at decoding that their use and study becomes out of reach. We address this problem by leveraging sparsity. We study sparse variants for all layers in the Transformer and propose Scaling Transformers, a family of next generation Transformer models that use sparse layers to scale efficiently and perform unbatched decoding much faster than the standard Transformer as we scale up the model size. Surprisingly, the sparse layers are enough to obtain the same perplexity as the standard Transformer with the same number of parameters. We also integrate with prior sparsity approaches to attention and enable fast inference on long sequences even with limited memory. This results in performance competitive to the state-of-the-art on long text summarization.

Authors: Sebastian Jaszczur, Aakanksha Chowdhery, Afroz Mohiuddin, Łukasz Kaiser, Wojciech Gajewski, Henryk Michalewski, Jonni Kanerva
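
To make the sparsity idea from the abstract a bit more concrete, here is a minimal pure-Python sketch of a block-sparse feedforward layer at inference time: the hidden units are split into blocks, a controller picks one unit per block, and only those units are evaluated. This is an illustration under assumptions, not the paper's or Trax's implementation. The names `dense_ff`, `sparse_ff`, `controller`, and `block_size` are hypothetical, and the controller here is a plain linear scorer chosen for readability.

```python
import random

def dense_ff(x, w1, w2):
    """Standard feedforward: relu(x @ w1) @ w2, evaluating every hidden unit."""
    d_ff, d_model = len(w2), len(w2[0])
    hidden = [max(0.0, sum(xi * w1[i][j] for i, xi in enumerate(x)))
              for j in range(d_ff)]
    return [sum(hidden[j] * w2[j][k] for j in range(d_ff)) for k in range(d_model)]

def sparse_ff(x, w1, w2, controller, block_size):
    """Block-sparse feedforward: evaluate only one hidden unit per block.

    Assumes d_ff is divisible by block_size. The controller is a hypothetical
    dense linear scorer used only for illustration; a practical controller
    would have to be much cheaper than the layer it gates.
    """
    d_ff, d_model = len(w2), len(w2[0])
    out = [0.0] * d_model
    active = []
    for start in range(0, d_ff, block_size):
        # Score every unit in this block and keep the highest-scoring one.
        scores = [sum(xi * controller[i][j] for i, xi in enumerate(x))
                  for j in range(start, start + block_size)]
        j = start + scores.index(max(scores))
        active.append(j)
        # Touch only column j of w1 and row j of w2.
        h = max(0.0, sum(xi * w1[i][j] for i, xi in enumerate(x)))
        for k in range(d_model):
            out[k] += h * w2[j][k]
    return out, active

if __name__ == "__main__":
    random.seed(0)
    d_model, d_ff, block_size = 4, 8, 4

    def rand_matrix(rows, cols):
        return [[random.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

    x = [random.uniform(-1, 1) for _ in range(d_model)]
    w1, w2 = rand_matrix(d_model, d_ff), rand_matrix(d_ff, d_model)
    controller = rand_matrix(d_model, d_ff)
    out, active = sparse_ff(x, w1, w2, controller, block_size)
    print("active hidden units:", active)
    print("output:", out)
```

With `block_size = 4`, the sparse path reads a quarter of the columns of `w1` and rows of `w2` per token, which is where the decoding speedup in this kind of layer would come from.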

Links:

TabNine Code Completion (Referral): http://bit.ly/tabnine-yannick

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://discord.gg/4H8xxDF

BitChute: https://www.bitchute.com/channel/yann...

LinkedIn: https://www.linkedin.com/in/ykilcher

BiliBili: https://space.bilibili.com/2017636191

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannick...

Patreon: https://www.patreon.com/yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n
