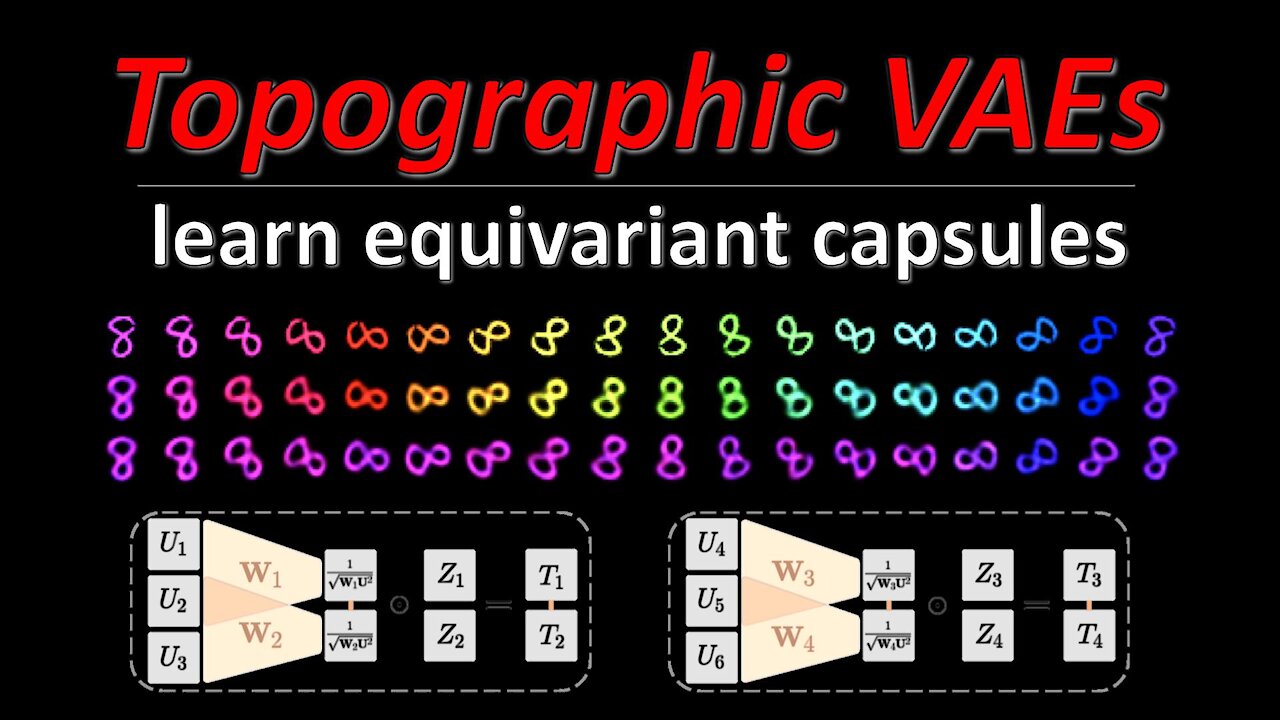

Topographic VAEs learn Equivariant Capsules (Machine Learning Research Paper Explained)

#tvae #topographic #equivariant

Variational Autoencoders model the latent space as a set of independent Gaussian random variables, which the decoder maps to a data distribution. However, this independence is not always desired: in video sequences, for example, successive frames are heavily correlated, so any latent space for such data should reflect that correlation in its structure. Topographic VAEs are a framework for defining correlation structures among the latent variables and inducing equivariance in the resulting model. This paper shows how such correlation structures can be built by appropriately arranging higher-level variables, which are themselves independent Gaussians.
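
To make the idea of building correlated latents from independent Gaussians concrete, here is a minimal NumPy sketch. It assumes a circular 1-D neighbourhood of hypothetical size `window` and the ratio form t = z / sqrt(sum of neighbouring u^2); the function name, shapes, and neighbourhood layout are illustrative choices, not the authors' code.

```python
import numpy as np

def topographic_latents(n_samples, dim, window, seed=0):
    """Sketch: correlated heavy-tailed latents from independent Gaussians.

    Hypothetical helper. Assumes a circular 1-D topography in which each
    latent shares its scale with `window` neighbouring positions.
    """
    rng = np.random.default_rng(seed)
    z = rng.standard_normal((n_samples, dim))  # independent Gaussians (numerator)
    u = rng.standard_normal((n_samples, dim))  # independent Gaussians (scale)
    u_sq = u ** 2
    # Sum u^2 over a sliding circular window; overlapping windows mean
    # neighbouring latents share part of their scale.
    pooled = sum(np.roll(u_sq, shift, axis=1) for shift in range(window))
    return z / np.sqrt(pooled)

t = topographic_latents(n_samples=20000, dim=16, window=3)

# Neighbouring latents have correlated log-magnitudes, distant ones do not.
log_mag = np.log(np.abs(t))
near = np.corrcoef(log_mag[:, 0], log_mag[:, 1])[0, 1]
far = np.corrcoef(log_mag[:, 0], log_mag[:, 8])[0, 1]
```

The individual `z` and `u` entries are all independent; the dependence between neighbouring entries of `t` comes only from the overlapping windows in the denominator.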

OUTLINE:

0:00 - Intro

1:40 - Architecture Overview

6:30 - Comparison to regular VAEs

8:35 - Generative Mechanism Formulation

11:45 - Non-Gaussian Latent Space

17:30 - Topographic Product of Student-t

21:15 - Introducing Temporal Coherence

24:50 - Topographic VAE

27:50 - Experimental Results

31:15 - Conclusion & Comments

Paper: https://arxiv.org/abs/2109.01394

Code: https://github.com/akandykeller/topog...

Abstract:

In this work we seek to bridge the concepts of topographic organization and equivariance in neural networks. To accomplish this, we introduce the Topographic VAE: a novel method for efficiently training deep generative models with topographically organized latent variables. We show that such a model indeed learns to organize its activations according to salient characteristics such as digit class, width, and style on MNIST. Furthermore, through topographic organization over time (i.e. temporal coherence), we demonstrate how predefined latent space transformation operators can be encouraged for observed transformed input sequences -- a primitive form of unsupervised learned equivariance. We demonstrate that this model successfully learns sets of approximately equivariant features (i.e. "capsules") directly from sequences and achieves higher likelihood on correspondingly transforming test sequences. Equivariance is verified quantitatively by measuring the approximate commutativity of the inference network and the sequence transformations. Finally, we demonstrate approximate equivariance to complex transformations, expanding upon the capabilities of existing group equivariant neural networks.
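
The commutativity check mentioned in the abstract can be illustrated with a toy example. The sketch below assumes a circular shift as the input transformation and a circular roll as the corresponding latent operator; the two encoders are hypothetical stand-ins, not the paper's inference network.

```python
import numpy as np

def equivariance_error(encode, x, transform_in, transform_out):
    """Relative gap between encode(transform(x)) and transform(encode(x))."""
    lhs = encode(transform_in(x))
    rhs = transform_out(encode(x))
    return np.linalg.norm(lhs - rhs) / np.linalg.norm(rhs)

rng = np.random.default_rng(0)
kernel = rng.standard_normal(5)
dense = rng.standard_normal((32, 32))

def conv_encoder(x):
    # Circular convolution-style encoder: commutes with circular shifts.
    return sum(k * np.roll(x, i) for i, k in enumerate(kernel))

def dense_encoder(x):
    # Unstructured linear encoder: no reason to commute with shifts.
    return dense @ x

def shift(v):
    # Circular shift by one position, used on both inputs and latents.
    return np.roll(v, 1)

x = rng.standard_normal(32)
conv_err = equivariance_error(conv_encoder, x, shift, shift)
dense_err = equivariance_error(dense_encoder, x, shift, shift)
```

An error near zero means the encoder and the transformation commute. For a learned model one would expect a small but non-zero value, which is why the abstract speaks of approximate equivariance.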

Authors: T. Anderson Keller, Max Welling

Links:

TabNine Code Completion (Referral): http://bit.ly/tabnine-yannick

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://discord.gg/4H8xxDF

BitChute: https://www.bitchute.com/channel/yann...

Minds: https://www.minds.com/ykilcher

Parler: https://parler.com/profile/YannicKilcher

LinkedIn: https://www.linkedin.com/in/ykilcher

BiliBili: https://space.bilibili.com/1824646584

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannick...

Patreon: https://www.patreon.com/yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n
