
Multivariate Regression and Gradient Descent

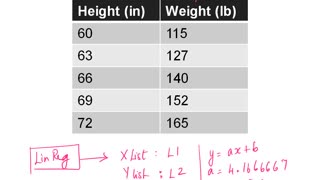

In a previous video, we used linear and logistic regression as a means of testing the gradient descent algorithm. When I was asked to do a video on logistic regression, I realized the example I used in the gradient descent video was rather complex. Not only is the model more complicated than linear regression, but I was also using multiple features. This is akin to fitting a plane to 3-D data rather than a line to 2-D data, as in simple linear regression. In addition, vectorizing the code requires a lot of matrix manipulation. In this video, I want to go over that matrix manipulation using the simple case of linear regression, and show how doing this not only gives us a speed benefit from vectorization but also generalizes our code to handle multiple parameters with a single gradient function.
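A minimal NumPy sketch of the idea, assuming a mean-squared-error cost J(θ) = (1/2m)·||Xθ − y||² (the 1/2 factor is a common convention and may differ from the one used in the video). Prepending a column of ones to the feature matrix turns the intercept into just another parameter, so a single vectorized gradient, (1/m)·Xᵀ(Xθ − y), handles a line fit and a multi-feature plane fit alike. All function names here (`add_bias_column`, `gradient`, `gradient_loop`, `gradient_descent`) are hypothetical and not taken from the video's code; the loop version is included only to show that the matrix form computes the same partial derivatives.

```python
import numpy as np


def add_bias_column(x):
    """Prepend a column of ones so the intercept becomes just another parameter."""
    x = np.asarray(x, dtype=float)
    if x.ndim == 1:
        x = x.reshape(-1, 1)  # treat a 1-D input as a single feature
    return np.hstack([np.ones((x.shape[0], 1)), x])


def gradient(theta, X, y):
    """Vectorized gradient of J(theta) = 1/(2m) * ||X @ theta - y||^2."""
    m = X.shape[0]
    residuals = X @ theta - y  # predictions minus targets, shape (m,)
    return X.T @ residuals / m  # one partial derivative per parameter


def gradient_loop(theta, X, y):
    """Same gradient, computed one partial derivative at a time (slow, for comparison)."""
    m, n = X.shape
    grad = np.zeros(n)
    for j in range(n):
        total = 0.0
        for i in range(m):
            prediction = sum(theta[k] * X[i, k] for k in range(n))
            total += (prediction - y[i]) * X[i, j]
        grad[j] = total / m
    return grad


def gradient_descent(X, y, alpha=0.1, n_iters=5000):
    """Plain batch gradient descent starting from theta = 0."""
    theta = np.zeros(X.shape[1])
    for _ in range(n_iters):
        theta = theta - alpha * gradient(theta, X, y)
    return theta


if __name__ == "__main__":
    # Simple linear regression: one feature plus an intercept (a line in 2-D).
    x = np.linspace(0, 1, 50)
    theta_line = gradient_descent(add_bias_column(x), 2 + 3 * x, alpha=0.5)
    print("line fit:", theta_line)

    # Two features plus an intercept (a plane in 3-D), same gradient function.
    rng = np.random.default_rng(0)
    F = rng.uniform(0, 1, size=(100, 2))
    y_plane = 1 + 2 * F[:, 0] - 3 * F[:, 1]
    theta_plane = gradient_descent(add_bias_column(F), y_plane, alpha=0.5)
    print("plane fit:", theta_plane)
```

Nothing in `gradient` depends on the number of columns in `X`, which is the point made above: moving from a line to a plane only changes the shape of the design matrix, not the code. The loop version performs the same arithmetic element by element in Python, which is where the speed benefit of vectorizing comes from.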

Gradient Descent Part 1: https://youtu.be/trvgzYjUr-Y

Gradient Descent Part 2: https://youtu.be/J1ghebX8XGY

Gradient Descent Part 3: https://youtu.be/Twxe59IjHDk

Linear Regression: https://youtu.be/jmKfDvk4k6g

More on Linear Regression: https://youtu.be/UX_b6ZuZLbI

Tip Jar: https://paypal.me/kpmooney
