
ICYMI - Will Wilson, co-founder of Antithesis, an AI contractor for Palantir

ICYMI - Will Wilson, co-founder of Antithesis, an AI contractor for Palantir (+ PETITION):

🔤🔤🔤🔤🔤🔤🔤🔤🔤🔤

“It's very possible that we're entering a world where very soon any kind of cognitive labor, any kind of reason, any kind of thought... It'll be a thing that weirdos do.”

Read more:

https://www.disclose.tv/id/uiptylubfw/

🔤🔤🔤🔤🔤🔤🔤🔤🔤🔤

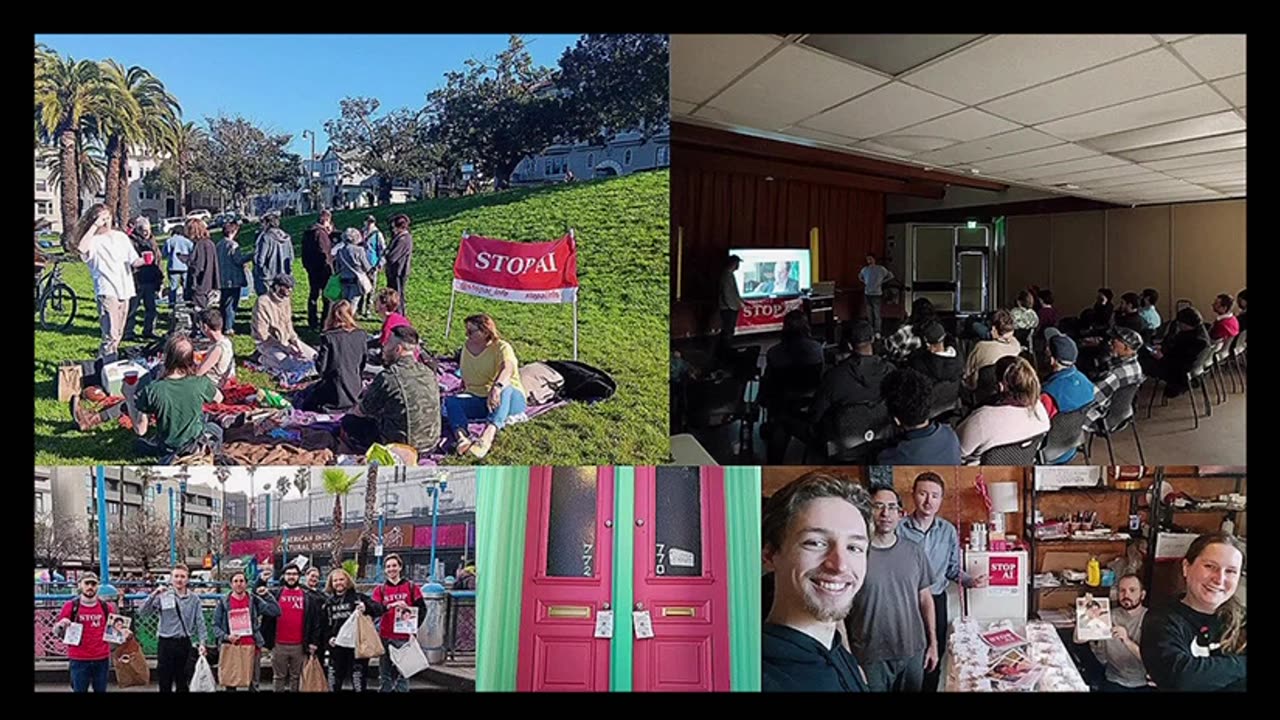

STOP AI

https://www.stopai.info/

🔤🔤🔤🔤🔤🔤🔤🔤🔤🔤

PETITION:

Only 33,795 signatures so far, as of 22 March 2023

Pause Giant AI Experiments: An Open Letter

We call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4.

FULL LETTER AVAILABLE BY CLICKING ON THE LINK BELOW

https://futureoflife.org/open-letter/pause-giant-ai-experiments/

Abstract:

AI systems with human-competitive intelligence can pose profound risks to society and humanity, as shown by extensive research[1] and acknowledged by top AI labs.[2] As stated in the widely-endorsed Asilomar AI Principles, Advanced AI could represent a profound change in the history of life on Earth, and should be planned for and managed with commensurate care and resources. Unfortunately, this level of planning and management is not happening, even though recent months have seen AI labs locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one – not even their creators – can understand, predict, or reliably control.

Contemporary AI systems are now becoming human-competitive at general tasks,[3] and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization? Such decisions must not be delegated to unelected tech leaders. Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable. This confidence must be well justified and increase with the magnitude of a system’s potential effects. OpenAI’s recent statement regarding artificial general intelligence, states that “At some point, it may be important to get independent review before starting to train future systems, and for the most advanced efforts to agree to limit the rate of growth of compute used for creating new models.” We agree. That point is now.

Therefore, we call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4.

This pause should be public and verifiable, and include all key actors. If such a pause cannot be enacted quickly, governments should step in and institute a moratorium.

AI labs and independent experts should use this pause to jointly develop and implement a set of shared safety protocols for advanced AI design and development that are rigorously audited and overseen by independent outside experts. These protocols should ensure that systems adhering to them are safe beyond a reasonable doubt.[4] This does not mean a pause on AI development in general, merely a stepping back from the dangerous race to ever-larger unpredictable black-box models with emergent capabilities.

AI research and development should be refocused on making today’s powerful, state-of-the-art systems more accurate, safe, interpretable, transparent, robust, aligned, trustworthy, and loyal.

We have prepared some FAQs in response to questions and discussion in the media and elsewhere. They are available from the letter's page linked above.

In addition to this open letter, we have published a set of policy recommendations: Policymaking in the Pause (12th April 2023).


🔤🔤🔤🔤🔤🔤🔤🔤🔤🔤

Compilation and captions:

https://t.me/IdiocracyfortheDummies/7306
