
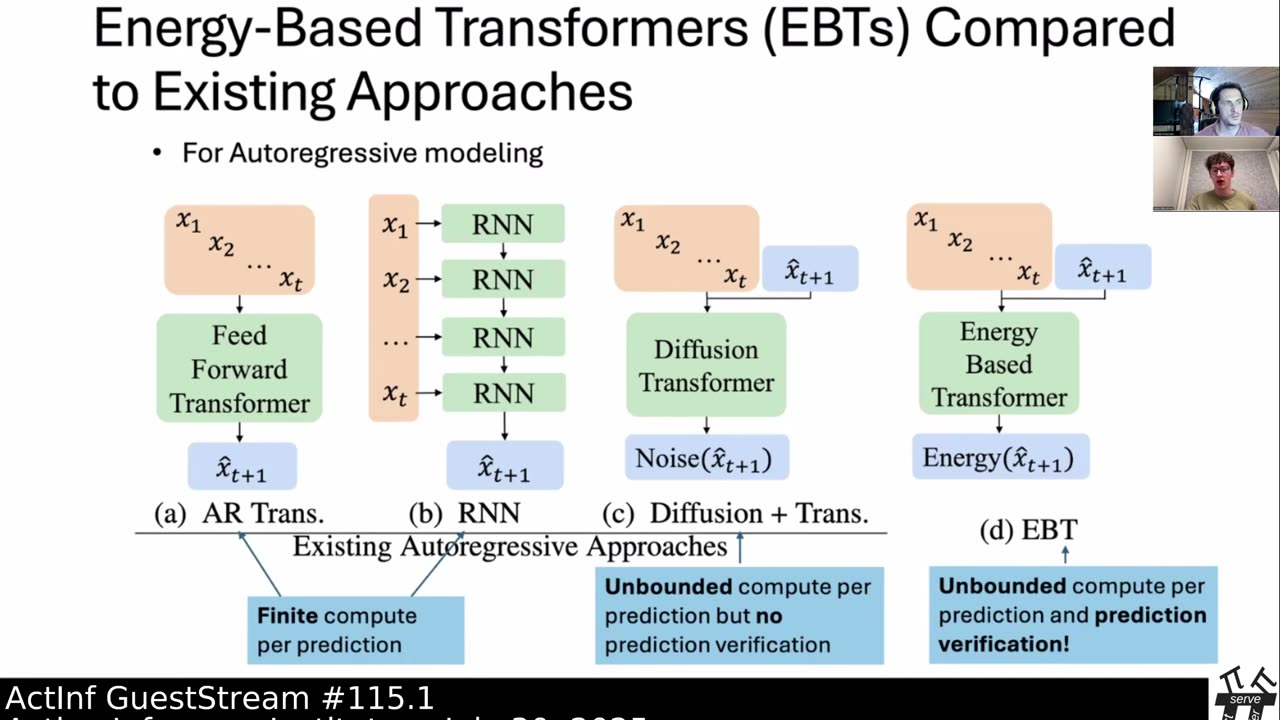

ActInf GuestStream 115.1 ~ Energy-Based Transformers and the Future of Scaling

"Energy-Based Transformers are Scalable Learners and Thinkers"

Alexi Gladstone, Ganesh Nanduru, Md Mofijul Islam, Peixuan Han, Hyeonjeong Ha, Aman Chadha, Yilun Du, Heng Ji, Jundong Li, Tariq Iqbal

https://arxiv.org/abs/2507.02092

Inference-time computation techniques, analogous to human System 2 Thinking, have recently become popular for improving model performances. However, most existing approaches suffer from several limitations: they are modality-specific (e.g., working only in text), problem-specific (e.g., verifiable domains like math and coding), or require additional supervision/training on top of unsupervised pretraining (e.g., verifiers or verifiable rewards). In this paper, we ask the question "Is it possible to generalize these System 2 Thinking approaches, and develop models that learn to think solely from unsupervised learning?" Interestingly, we find the answer is yes, by learning to explicitly verify the compatibility between inputs and candidate-predictions, and then re-framing prediction problems as optimization with respect to this verifier. Specifically, we train Energy-Based Transformers (EBTs) -- a new class of Energy-Based Models (EBMs) -- to assign an energy value to every input and candidate-prediction pair, enabling predictions through gradient descent-based energy minimization until convergence. Across both discrete (text) and continuous (visual) modalities, we find EBTs scale faster than the dominant Transformer++ approach during training, achieving an up to 35% higher scaling rate with respect to data, batch size, parameters, FLOPs, and depth. During inference, EBTs improve performance with System 2 Thinking by 29% more than the Transformer++ on language tasks, and EBTs outperform Diffusion Transformers on image denoising while using fewer forward passes. Further, we find that EBTs achieve better results than existing models on most downstream tasks given the same or worse pretraining performance, suggesting that EBTs generalize better than existing approaches. Consequently, EBTs are a promising new paradigm for scaling both the learning and thinking capabilities of models.
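The prediction procedure the abstract describes, assigning an energy to each input and candidate-prediction pair and then descending the energy with respect to the candidate, can be illustrated with a small sketch. This is not the paper's model: the quadratic `energy` function, the fixed matrix `W`, and the names `predict_by_energy_minimization`, `step_size`, and `tol` are all assumptions made for illustration. In an EBT the energy would come from a trained Transformer and the gradient from backpropagation through it.

```python
import numpy as np

def energy(x, y, W):
    """Toy energy (assumed for illustration): low when candidate y matches W @ x."""
    residual = y - W @ x
    return 0.5 * float(residual @ residual)

def energy_grad_y(x, y, W):
    """Analytic gradient of the toy energy with respect to the candidate y."""
    return y - W @ x

def predict_by_energy_minimization(x, W, step_size=0.5, tol=1e-6,
                                   max_steps=1000, seed=0):
    """Start from a random candidate and descend the energy until convergence."""
    rng = np.random.default_rng(seed)
    y = rng.normal(size=W.shape[0])      # random initial candidate prediction
    energies = [energy(x, y, W)]
    for _ in range(max_steps):
        grad = energy_grad_y(x, y, W)
        if np.linalg.norm(grad) < tol:   # converged: gradient is (almost) zero
            break
        y = y - step_size * grad         # one refinement ("thinking") step
        energies.append(energy(x, y, W))
    return y, energies

# Hypothetical stand-in for a learned model and an input.
W = np.array([[1.0, 2.0], [0.0, -1.0], [3.0, 0.5]])
x = np.array([0.5, -1.0])

y_hat, energies = predict_by_energy_minimization(x, W)
print("steps taken:", len(energies) - 1)
print("final energy:", energies[-1])
```

In this toy setting each extra descent step lowers the energy, which mirrors the idea that spending more computation at inference time refines the prediction. The number of steps acts as the compute budget; a looser `tol` or a smaller `max_steps` trades accuracy for speed.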

Subjects: Machine Learning (cs.LG); Artificial Intelligence (cs.AI); Computation and Language (cs.CL); Computer Vision and Pattern Recognition (cs.CV)

Cite as: arXiv:2507.02092 [cs.LG]

(or arXiv:2507.02092v1 [cs.LG] for this version)

https://doi.org/10.48550/arXiv.2507.02092

Active Inference Institute information:

Website: https://www.activeinference.institute/

Activities: https://activities.activeinference.institute/

Discord: https://discord.activeinference.institute/

Donate: http://donate.activeinference.institute/

YouTube: https://www.youtube.com/c/ActiveInference/

X: https://x.com/InferenceActive

Active Inference Livestreams: https://video.activeinference.institute/
