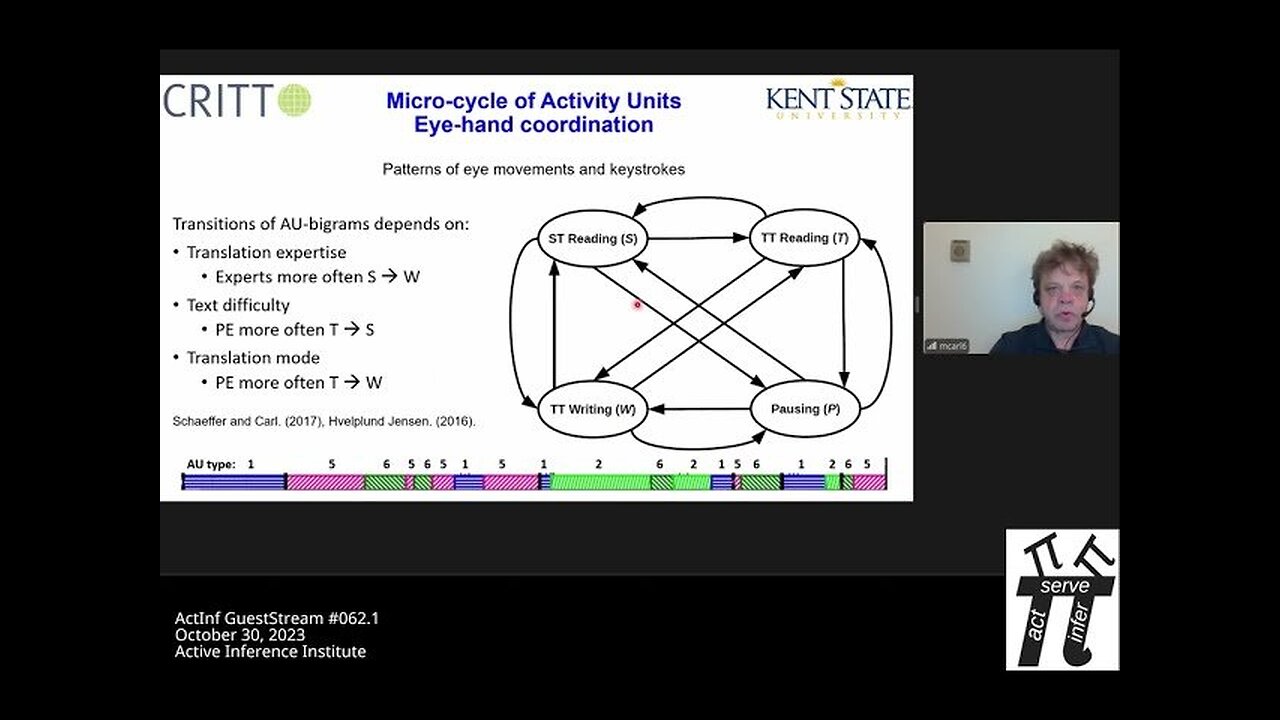

ActInf GuestStream 062.1 ~ Michael Carl, "Deep temporal Models of the Translation Process"

Michael Carl "Deep temporal Models of the Translation Process"

https://scholar.google.com/citations?...

Abstract: Translation Process Research (TPR) investigates how humans produce translations from one language into another. Several methods have been used to gather evidence for the assumed underlying translation processes, including think-aloud protocols, (retrospective) interviews, questionnaires, screen recording, and brain imaging technologies (EEG, fMRI). In our research, we use keyloggers and eye trackers to collect behavioral data during translation sessions. The two data streams are synchronized to establish correspondences between sensory input (reading) and translational action (typing). They are then analyzed to arrive, among other things, at a better understanding of the relations between translation effort and effects, which depend heavily on the translator's expertise, text difficulty, expected translation quality, etc.

Numerous models of the translating mind have been proposed, some of which posit that multiple, more or less automatized and/or conscious translation processes complement each other during translation production. In this talk I suggest the Free Energy Principle (FEP) and Active Inference (AIF) as a novel framework for analyzing, specifying and modelling deeply embedded translation processes, and for simulating their interaction within the partially observable Markov decision process (POMDP) framework. I exemplify how translation-behavioral data (keystrokes and gaze data) reveal traces of a deep temporal architecture, in which human translation production is modelled as several temporally embedded and interacting processes that unfold on different timelines. Following FEP/AIF, the deep temporal architecture allows translators to arrive at a steady state of fluent translation production by minimizing the discrepancy between the translator's internal states and the (textual) states in the external translation environment.

Active Inference Institute information:

Website: https://activeinference.org/

Twitter: / inferenceactive

Discord: / discord

YouTube: / activeinference

Active Inference Livestreams: https://coda.io/@active-inference-ins...
