Premium Only Content

This video is only available to Rumble Premium subscribers. Subscribe to

enjoy exclusive content and ad-free viewing.

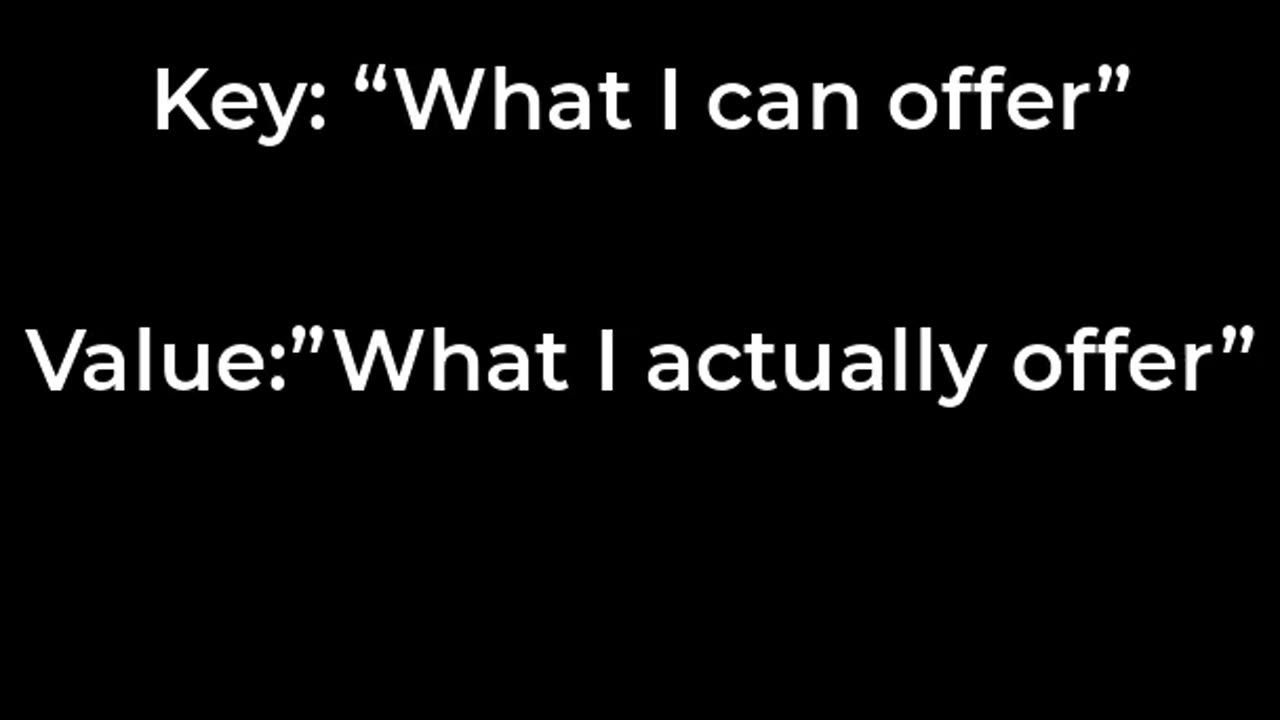

Understanding Query, Key and Value Vectors in Transformer Networks

2 years ago

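This video explains query, key and value vectors, which are central to the attention mechanism used in transformer neural networks. Each input element is projected into a query vector, a key vector and a value vector using learned weight matrices. The attention mechanism scores how relevant one element is to another by taking the dot product of the first element's query with the second element's key. It then scales these scores and normalizes them with a softmax. Each element's output is a weighted sum of the value vectors, so the values carry the contextual information from the elements that scored as relevant. Transformers run several of these attention computations in parallel (multi-headed attention). This lets them learn contextual relationships both within a sequence and between input and output sequences. Understanding how query, key and value vectors work can help in designing and optimizing transformer models for natural language processing and computer vision tasks.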
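The following is a minimal sketch of that computation. It assumes the standard scaled dot-product attention from the original transformer design, with scores computed as q·k / sqrt(d_k). It uses plain Python lists rather than a tensor library. The helper names (`matmul`, `softmax`, `scaled_dot_product_attention`, `self_attention`) and the toy weight matrices are hypothetical illustrations, not code from the video.

```python
import math

def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m); matrices are lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]

def softmax(scores):
    """Numerically stable softmax over one row of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(q, k, v):
    """Return (output, weights) for query, key and value matrices."""
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]  # transpose of K
    # scores[i][j] = q_i . k_j / sqrt(d_k): relevance of element j to element i
    scores = [[s / math.sqrt(d_k) for s in row] for row in matmul(q, k_t)]
    weights = [softmax(row) for row in scores]  # each row sums to 1
    # Each output row is a weighted average of the value vectors.
    return matmul(weights, v), weights

def self_attention(x, w_q, w_k, w_v):
    """Project inputs into queries, keys and values, then attend."""
    return scaled_dot_product_attention(matmul(x, w_q), matmul(x, w_k), matmul(x, w_v))

# Hypothetical toy data: three 2-dimensional token embeddings and
# hand-picked projection matrices (a real model learns these weights).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w_q = [[1.0, 0.0], [0.0, 1.0]]
w_k = [[1.0, 0.0], [0.0, 1.0]]
w_v = [[0.5, 0.0], [0.0, 2.0]]
out, attn = self_attention(x, w_q, w_k, w_v)
```

Row `i` of `attn` shows how strongly element `i` attends to every element, and row `i` of `out` is the corresponding mix of value vectors. Multi-headed attention would repeat this with several independent sets of `w_q`, `w_k` and `w_v`, concatenate the per-head outputs, and apply one more learned projection.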
