Translating Files in LibreTranslate Tutorial

Translating files in LibreTranslate

1. Navigate to a LibreTranslate instance in a web browser, for example libretranslate.com

2. Click on the “Translate Files” tab at the top of the page

3. Click the “File” button to upload your file

4. Click “Translate” to translate your document

5. Click “Download” to download your translated document


Argos AI Adventure Coding Livestream

A fantasy themed text based adventure game written with GitHub Copilot

The goal of this project is to generate code using GitHub Copilot, a code generation language model, with human help for oversight and high-level direction. The thinking is that "Quantity has a Quality of its own": even if the code generated by GitHub Copilot is low quality, it can be generated at a relatively low cost.

https://github.com/argosopentech/argos-ai-adventure


0 AD Gameplay

“0 A.D. (pronounced “zero-ey-dee”) is a free, open-source, historical Real Time Strategy (RTS) game currently under development by Wildfire Games, a global group of volunteer game developers. As the leader of an ancient civilization, you must gather the resources you need to raise a military force and dominate your enemies.”

https://play0ad.com/


Live Coding an AI to Play Minecraft


- Run open source Minecraft clone Craft

- Record and process simulated play data

- Train a Vision Transformer neural network to control the keyboard

Using PyTorch, Python, and a Linux Desktop


Live Coding an AI to Play Minecraft part 2 of 2

Live Coding an AI to Play Minecraft part 2

- Run open source Minecraft clone Craft

- Record and process simulated play data

- Train a Vision Transformer neural network to control the keyboard

Using PyTorch, Python, and a Linux Desktop


Can we build open source Minecraft?

In this video we discuss the need for an open-source Minecraft implementation, how trademark and copyright law works, and the current state of open source Minecraft.

Creative Commons CC0


Open Source Language Models with Eleuther AI

Testing Eleuther AI’s open source large language models.

Eleuther AI: https://www.eleuther.ai/

20B Demo: https://20b.eleuther.ai/

Creative Commons CC0


Technical Explanation of IPFS

A technical explanation of IPFS covering Merkle DAGs, distributed hash tables, and a comparison to BitTorrent


The Unix Philosophy


The Unix Philosophy is a system design philosophy of using minimalist composable components.

As a software development style the Unix Philosophy advocates building small tools that communicate over a universal interface to create larger systems. Higher level functionality then emerges from the combination of these composable tools instead of from a monolithic program.

Formulations

Peter Salus

Write programs that do one thing and do it well.

Write programs to work together.

Write programs to handle text streams, because that is a universal interface.

Doug McIlroy

Make each program do one thing well. To do a new job, build afresh rather than complicate old programs by adding new "features".

Expect the output of every program to become the input to another, as yet unknown, program. Don't clutter output with extraneous information. Avoid stringently columnar or binary input formats. Don't insist on interactive input.

Design and build software, even operating systems, to be tried early, ideally within weeks. Don't hesitate to throw away the clumsy parts and rebuild them.

Use tools in preference to unskilled help to lighten a programming task, even if you have to detour to build the tools and expect to throw some of them out after you've finished using them.

This is the Unix philosophy: Write programs that do one thing and do it well. Write programs to work together. Write programs to handle text streams, because that is a universal interface.

The notion of "intricate and beautiful complexities" is almost an oxymoron. Unix programmers vie with each other for "simple and beautiful" honors — a point that's implicit in these rules, but is well worth making overt.

"The Unix Programming Environment" - Brian Kernighan and Rob Pike

Even though the UNIX system introduces a number of innovative programs and techniques, no single program or idea makes it work well. Instead, what makes it effective is the approach to programming, a philosophy of using the computer. Although that philosophy can't be written down in a single sentence, at its heart is the idea that the power of a system comes more from the relationships among programs than from the programs themselves. Many UNIX programs do quite trivial things in isolation, but, combined with other programs, become general and useful tools.

"Program Design in the UNIX Environment" - Brian Kernighan

Much of the power of the UNIX operating system comes from a style of program design that makes programs easy to use and, more important, easy to combine with other programs. This style has been called the use of software tools, and depends more on how the programs fit into the programming environment and how they can be used with other programs than on how they are designed internally. [...] This style was based on the use of tools: using programs separately or in combination to get a job done, rather than doing it by hand, by monolithic self-sufficient subsystems, or by special-purpose, one-time programs.

Do One Thing and Do It Well

The central idea of the Unix Philosophy is to build composable components that do one thing well. Small minimalist components are easier to understand and modify which allows for faster experimentation. New features are added by connecting to existing components or creating a new component. Higher order functionality then emerges from the connections between components.

In Unix-style development, developers build tools to solve problems instead of relying on outside help. The limiting factor in software development is typically developer understanding. When modifying a system, a developer often spends most of their time trying to understand the system and only a small amount of time making the relevant change. By scaling a single developer with tools they understand, communication and understanding burdens are decreased. The volume of internal communication scales O(n^3) with the number of developers while their surface area of useful work with the outside world scales O(n^2). The modularity and composability of the Unix Philosophy increase what individual developers can accomplish and make it easier for developers to share code and work in parallel.

Everything is a File

Unix operating systems are based around files. Data is stored in files, configuration is done with files, logs are saved as files, and OS services like input/output and communication with other processes are exposed through a file interface.

Examples

This is an example of reading and searching a log file with Unix tools.

$ cat logs.txt

info: Startup

info: Load data

info: Processing

error: Divide by zero line 42

warning: Calculation failed

info: Exiting

$ grep error logs.txt

error: Divide by zero line 42
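The same search can be sketched in Python as small functions that consume and produce lines of text, mirroring the text stream interface. The names `grep` and `count` below are illustrative helpers written for this example, not part of any library, and the log contents are copied from the example above.

```python
import io
from typing import Iterable, Iterator

# Log contents copied from the example above.
LOG_TEXT = """info: Startup
info: Load data
info: Processing
error: Divide by zero line 42
warning: Calculation failed
info: Exiting
"""


def grep(pattern: str, lines: Iterable[str]) -> Iterator[str]:
    """Yield only the lines that contain the given substring."""
    for line in lines:
        if pattern in line:
            yield line


def count(lines: Iterable[str]) -> int:
    """Count the lines in a stream, similar to `wc -l`."""
    return sum(1 for _ in lines)


# Each step takes a stream of lines and returns a stream of lines,
# so the steps can be chained like a shell pipeline.
error_lines = list(grep("error", io.StringIO(LOG_TEXT)))
info_count = count(grep("info", io.StringIO(LOG_TEXT)))

print("".join(error_lines), end="")
print(info_count)
```

Because both helpers only assume "an iterable of lines", either one could be swapped out or combined with a new step without changing the others.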

Possible Improvements

The Unix Philosophy has traditionally been based around the universal text interface, which works well, but connecting components with a scripting language or structured data format has many advantages.

A disadvantage of the text interface is that it is difficult to pass data structures or advanced functionality between components. This can be done easily using scripting languages like Python and data formats like JSON.

For example, if a programmer wanted to pass a list of dictionaries between two components through a text interface it would require complex parsing. However, this is easily accomplished by passing a JSON object.

[

{

"P.J.": 10,

"Brian": 6

},

{

"Peter": 8

}

]
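As a minimal sketch of how this might look in Python, the standard library `json` module can serialize the list of dictionaries to text on one side and parse it back on the other. The variable names and the `total_score` helper are made up for this example.

```python
import json
from typing import Dict, List

# The list of dictionaries from the example above.
scores: List[Dict[str, int]] = [{"P.J.": 10, "Brian": 6}, {"Peter": 8}]

# Producer side: turn the data structure into text that can be
# written to a file, a pipe, or a network socket.
serialized = json.dumps(scores)


def total_score(json_text: str) -> int:
    """Consumer side: parse the text and sum every score in every dictionary."""
    records = json.loads(json_text)
    return sum(value for record in records for value in record.values())


restored = json.loads(serialized)
print(restored == scores)
print(total_score(serialized))
```

The data still travels as text, so it stays compatible with pipes and files, but neither component has to write its own parser.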


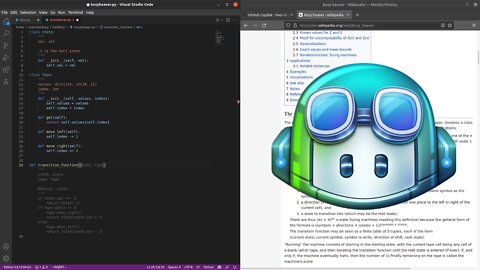

Busy Beaver with GitHub Copilot

Building a busy beaver implementation using GitHub Copilot: https://gist.github.com/PJ-Finlay/8406f7f7f8d1d53cfc542ef1c4d4a7df


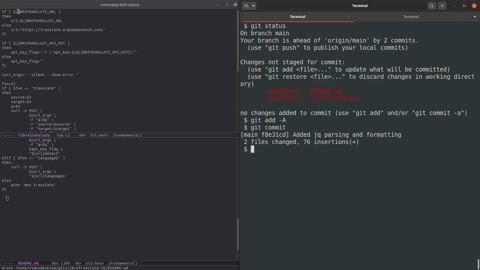

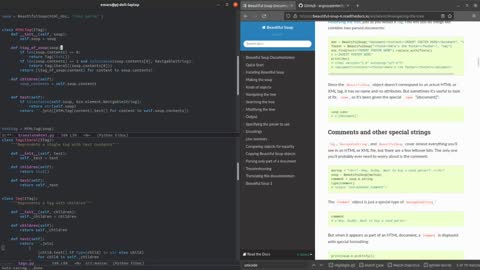

HTML translation with Beautiful Soup and Argos Translate

Translating tags at inference with tag injection in Argos Translate.

Links:

https://github.com/argosopentech/argos-translate

https://github.com/argosopentech/translate-html

https://github.com/argosopentech/argos-translate/commit/56db272d775794d7fac2c0ae547100a899dc9067

https://www.argosopentech.com

https://forum.opennmt.net/t/suggestions-for-translating-xml/4409/6

https://github.com/argosopentech/argos-translate/discussions/100

Creative Commons CC0


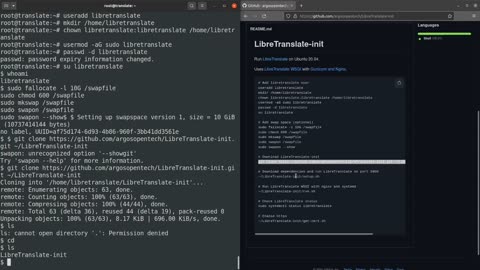

Installing LibreTranslate on Ubuntu with LibreTranslate-init

Run LibreTranslate on Ubuntu 20.04.

Uses LibreTranslate WSGI with Gunicorn and Nginx.

LibreTranslate-init: https://github.com/argosopentech/LibreTranslate-init/

Creative Commons CC0


Machine Translation in Argos Translate (2021)

Argos Translate: https://github.com/argosopentech/argos-translate

Argos Open Tech: https://www.argosopentech.com

LibreTranslate Demo: https://libretranslate.com

Machine translation in Argos Translate, an open source Python library and Desktop application for doing neural machine translation.

Covers the sequence to sequence Transformer model, inference, data, data processing, tokenization, sentence boundary detection, package management, peer to peer distribution, web app, desktop application, API, and language bindings for Rust, C#, and NodeJS.

In this video we will be discussing machine translation in Argos Translate, an open source Python library and Desktop application for doing neural machine translation.

The heart of a modern machine translation system is a neural network that does sequence to sequence modeling, that is converting a sequence of tokens in the source language into tokens in the target language.

Human language is very nuanced and it would be extremely difficult for a programmer to write a set of rules to fully capture its complexity. Neural networks trained on a large amount of data are able to capture this nuance much better using a process that is roughly analogous to what neurons in our brains do.

Argos Translate uses Transformer models, a neural network architecture with an attention mechanism that can be trained in parallel more efficiently than past approaches. Models are trained using OpenNMT, an open source neural machine translation system.

To run the models, Argos Translate uses CTranslate2, an optimized inference engine that supports both CPU and GPU execution. Fast CPU execution without the need for specialized hardware is important because it allows for simplified server deployment and local translations for enhanced privacy.

Argos Translate also supports pivoting through an intermediate language to perform translations that aren’t directly installed. For example, if Portuguese to English and English to Korean models were installed you could translate from Portuguese to Korean by passing through English.
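The pivoting idea can be sketched as a search over installed language pairs. This is an illustration under stated assumptions, not Argos Translate's actual implementation: the `INSTALLED` table, the fake translator functions, and the breadth-first route search are all hypothetical stand-ins.

```python
from collections import deque
from typing import Callable, Dict, List, Optional, Tuple

Translator = Callable[[str], str]
LanguagePair = Tuple[str, str]

# Hypothetical stand-ins for installed models. Each fake "model" just wraps
# the text so the route taken is visible in the output.
INSTALLED: Dict[LanguagePair, Translator] = {
    ("pt", "en"): lambda text: f"en({text})",
    ("en", "ko"): lambda text: f"ko({text})",
}


def find_route(
    src: str, dst: str, installed: Dict[LanguagePair, Translator]
) -> Optional[List[LanguagePair]]:
    """Breadth-first search for the shortest chain of installed pairs."""
    queue = deque([(src, [])])
    seen = {src}
    while queue:
        lang, path = queue.popleft()
        if lang == dst:
            return path
        for from_code, to_code in installed:
            if from_code == lang and to_code not in seen:
                seen.add(to_code)
                queue.append((to_code, path + [(from_code, to_code)]))
    return None


def translate(
    text: str, src: str, dst: str, installed: Dict[LanguagePair, Translator]
) -> str:
    """Translate by applying each model along the route in order."""
    route = find_route(src, dst, installed)
    if route is None:
        raise ValueError(f"No installed route from {src} to {dst}")
    for pair in route:
        text = installed[pair](text)
    return text


print(find_route("pt", "ko", INSTALLED))
print(translate("olá", "pt", "ko", INSTALLED))
```

Each extra hop passes the text through another model, so a pivoted translation will generally be slower and may lose more meaning than a direct one.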

Argos Translate has a desktop application based on PyQt and the LibreTranslate project has a web application.

The primary source of data for Argos Translate is the Opus parallel corpus which has compiled a number of open data sources in a standard format for easy access. Wiktionary definition data is also used.

The training scripts for Argos Translate load and filter the data for training. Emojis are removed from the training data to preserve emoji integrity in translations by preventing the sequence to sequence model from modifying them. There is also support for adding “special tokens” to the data to add functionality to the model. For example, the <define> token can be used with Wiktionary data to improve translation quality in languages without a large quantity of data available and to improve single word translations.

Finally, the training scripts package trained models in a standard format for use.

The sequence to sequence model operates on a series of discrete tokens. In theory you could make each character its own token. However, Argos Translate uses the SentencePiece tokenizer to split input text into sub-word tokens to take some of the burden of spelling words off of the sequence to sequence model. By splitting into sub-words instead of full words, the sequence to sequence model is still able to understand uncommon compound words.
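To make the sub-word idea concrete, here is a toy greedy longest-match tokenizer. It is not how SentencePiece works internally (SentencePiece learns its vocabulary from data); the tiny `VOCAB` set and the `tokenize_word` function are assumptions made purely for illustration.

```python
from typing import List, Set

# Hypothetical sub-word vocabulary; a real tokenizer learns this from data.
VOCAB: Set[str] = {"snow", "ball", "fire", "fight", "s"}


def tokenize_word(word: str, vocab: Set[str]) -> List[str]:
    """Split a word into the longest known pieces, left to right.

    Any character that starts no known piece becomes its own token, which
    is the "each character is a token" fallback mentioned above.
    """
    tokens: List[str] = []
    start = 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate piece until it is in the vocabulary
        # or only a single character is left.
        while end > start + 1 and word[start:end] not in vocab:
            end -= 1
        tokens.append(word[start:end])
        start = end
    return tokens


print(tokenize_word("snowballfight", VOCAB))
print(tokenize_word("fireballs", VOCAB))
```

Even though "snowballfight" is not in the vocabulary as a whole word, it is broken into pieces the model has seen before.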

Currently the sequence to sequence model is only able to translate individual sentences, meaning input text needs to be split into sentences. There are techniques for doing this effectively using periods; however, these techniques wouldn’t work for languages without periods. Instead, Argos Translate uses Stanza, which uses neural networks to do sentence boundary detection in a large number of languages.
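The limitation of punctuation-based splitting can be seen with a naive splitter. The function below is a deliberately simple sketch written for this example (it is not what Argos Translate or Stanza does): it splits on whitespace that follows `.`, `!`, or `?`.

```python
import re
from typing import List


def split_sentences_naive(text: str) -> List[str]:
    """Split on whitespace that follows ASCII sentence-ending punctuation."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


# Works for simple English text.
print(split_sentences_naive("Hello there. How are you? Fine."))

# Breaks on abbreviations, which also end with a period.
print(split_sentences_naive("Dr. Smith arrived."))

# Finds no boundary at all in text that does not use the ASCII period
# followed by a space, such as this Chinese example.
print(split_sentences_naive("你好。我很好。"))
```

A learned sentence boundary detector avoids hard-coding punctuation rules like these for every language.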

Argos Translate has a package manager and package index with pretrained models. Trained models are packaged with metadata and the files needed by Stanza and SentencePiece for easy distribution and installation. Packages can either be installed manually from a file or automatically downloaded from the package index using the Python library or desktop GUI.

Packages have peer to peer links for downloading models using either IPFS or BitTorrent. Peer to peer distribution of models reduces the cost of distribution and is more resilient without a single point of failure.

LibreTranslate is an API and web app built on top of Argos Translate using Python and the Flask framework. LibreTranslate can be run from a Docker container and has language bindings for Rust, C#, and NodeJS. It also supports detecting languages, and text in scripts not present in the dataset such as Russian written using the Latin alphabet.

Together these projects provide a powerful, extensible, open source translation stack with many potential applications. What are you going to build with it?

CC0 1.0 Universal (CC0 1.0): https://creativecommons.org/publicdomain/zero/1.0/


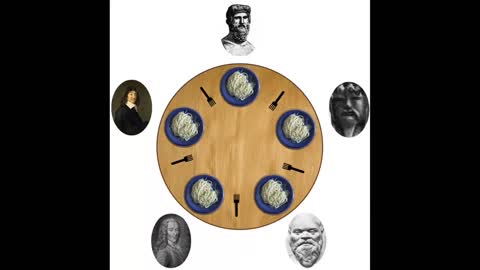

Deadlocks and the Dining Philosophers Problem

In this video we will cover the “Dining Philosophers” problem.

The “Dining Philosophers” problem is an example problem to demonstrate concurrent algorithm design.

A group of philosophers sit around a table and alternate between thinking and eating using the forks on their left and right. The forks represent a shared resource between the pair of philosophers on either side of them. Philosophers need both forks to eat and only one philosopher can use a fork at a time.

If the philosophers were to simply take forks as they needed them a situation could occur where a circle of philosophers are each holding one fork and waiting on another philosopher to give up a fork. This is referred to as a “deadlock”.

A simple solution to this problem is to add a waiter, who represents a lock, that the philosophers need exclusive access to before picking up either of their forks. Once a philosopher has exclusive access to the waiter’s attention they have that attention until the philosopher has successfully picked up both forks. When a philosopher has exclusive access to the waiter they will succeed in picking up their forks either because both forks are available, and no other philosophers have the waiter's attention, or they will wait with the waiter’s attention for the philosophers on either side of them to give up their forks.

This solution of using a central arbitrator to manage access prevents a circular cycle of philosophers holding one fork while waiting on another philosopher for their other fork that causes a deadlock. This solution is fair because all of the philosophers have equal access to the waiter. However, it can be inefficient because philosophers have to wait for the waiter even when both of their forks are available.
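The waiter solution described above can be sketched with Python threads, where each fork and the waiter are modeled as locks. The function name `run_dinner`, the number of philosophers, and the number of meals are choices made for this example.

```python
import threading
from typing import List


def run_dinner(num_philosophers: int, meals_each: int) -> List[int]:
    """Run the dinner and return how many meals each philosopher ate."""
    forks = [threading.Lock() for _ in range(num_philosophers)]
    waiter = threading.Lock()  # the central arbitrator
    meals_eaten = [0] * num_philosophers

    def philosopher(index: int) -> None:
        left = forks[index]
        right = forks[(index + 1) % num_philosophers]
        for _ in range(meals_each):
            # Thinking would happen here.
            # Only the philosopher holding the waiter's attention may pick
            # up forks, so nobody can be stuck holding just one fork while
            # another philosopher is also trying to pick forks up.
            with waiter:
                left.acquire()
                right.acquire()
            # Eating: the waiter is already free for the next philosopher.
            meals_eaten[index] += 1
            right.release()
            left.release()

    threads = [
        threading.Thread(target=philosopher, args=(i,))
        for i in range(num_philosophers)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return meals_eaten


print(run_dinner(5, 3))
```

The inefficiency mentioned above shows up in the `with waiter:` block: a philosopher whose forks are both free still has to queue behind whoever currently holds the waiter's attention.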

Reference:

Dining philosophers image: bdesham - https://commons.wikimedia.org/wiki/File:An_illustration_of_the_dining_philosophers_problem.png


Training an Argos Translate model tutorial (2022)

Training an Argos Translate model using Argos Train. Argos Translate models are based on OpenNMT, an open source translation software project. Argos Translate models can be used in the Argos Translate GUI or from LibreTranslate, an open source web application and API.

Argos Train: https://github.com/argosopentech/argos-train

Argos Open Tech: https://www.argosopentech.com/

OpenNMT: https://opennmt.net/

LibreTranslate: https://libretranslate.com/


Byzantine Generals Problem, Bitcoin, and Proof of Stake

Byzantine Generals: https://www.cs.cornell.edu/courses/cs6410/2018fa/slides/18-distributed-systems-byzantine-agreement.pdf

Merkle Trees: https://en.wikipedia.org/wiki/Merkle_tree

Bitcoin: https://bitcoin.org/bitcoin.pdf

Proof of Stake: https://ethereum.org/en/developers/docs/consensus-mechanisms/pos/


A Contamination Theory of the Obesity Epidemic

Update 2022-02-09: I've updated my beliefs on contamination as a primary cause of the obesity epidemic. I think a better model is that our bodies have a set weight they try to maintain and will return to that point if we move very far from it. This is why people that lose weight often gain it back, and other people struggle to gain weight at all. The obesity epidemic is then the result of people's weight set points gradually moving up due to a breakdown in this self-regulation mechanism caused by easily accessible hyper-palatable non-satiating processed food and general poor health.

https://slatestarcodex.com/2017/04/25/book-review-the-hungry-brain/

In this video we will be discussing a contamination theory of the obesity epidemic, which is the theory that the large increase in obesity seen in the industrialized world since 1950 is largely caused by contaminants in our food and water.

There has been a very dramatic increase in obesity since the 1950s that has accelerated since 1970. In the 1800s the average U.S. man weighed 155 lbs (70 kg), while today in 2021 he weighs 195 lbs (88 kg). One possible explanation is that we evolved for an environment without widely available high-calorie food and now eat too much of it. However, in my opinion the evidence better supports contamination as a larger cause.

Modern hunter-gatherers eat a wide range of diets, often without much variety, and consistently have healthy weights. Some eat large amounts of carbs, others proteins and fats, but they don’t have anywhere near the level of obesity seen in industrialized countries today. Additionally, immigrants to the United States from less industrialized countries generally have lower rates of obesity when they arrive, but become more obese while living in America. The obesity epidemic isn’t just in humans either: wild animals, lab animals, and zoo animals have all gotten fatter, even under controlled lab or zoo conditions.

Obesity can be induced in lab rats by feeding them a diet of highly processed and palatable human food.

Notably higher altitudes are correlated with lower rates of obesity. One possible explanation for this correlation is that contaminants build up in the water supply as they flow downstream.

Comparing the rates of obesity geographically in the United States, obesity increases as you follow the Mississippi watershed from the mountains in Colorado, with one of the lowest obesity rates, to the Mississippi’s mouth in Louisiana, one of the most obese states in the nation.

Many believe that carbs or sugar cause obesity; however, U.S. carb and sugar intake has decreased since 2000 while obesity has continued to increase.

The question then is what contaminants could be causing this? There are a number of theories including Lithium, livestock antibiotics, PFAS chemicals in industrial use, Glyphosate in herbicides, or a combination of contaminants. We would expect the relevant contaminants to have increased since 1950, and dramatically increased since 1970. Lithium fits this description, and is known to cause obesity when ingested in sufficient quantity, but there isn’t conclusive evidence for any individual contaminant.

If this is true, then what can be done? Since it isn’t known exactly what is causing the obesity epidemic it’s hard to say, but trying to reduce likely contaminants is probably a good strategy. Highly processed food is designed by food manufacturers to be addictive, causes obesity in lab rats, and potentially picks up more contamination with more processing. Replacing processed foods with unprocessed ones like potatoes, fruits, vegetables, and nuts would likely reduce contamination among other health benefits. Contaminants could also bioaccumulate in animals, so eating less meat or higher quality meat may reduce contaminants, and vegetarians currently have lower obesity rates than the general public. Drinking distilled or purified water, or getting water from a high elevation close to its source, could reduce potential contaminants in drinking water. Finally, living in an area with a lower obesity rate could reduce contaminants and socially expose you to healthier habits.

For more information I recommend reading Slime Mold Time Mold’s writings on this subject, which curate the current research in a digestible format.

https://slimemoldtimemold.com
